CSapp-Chapter1

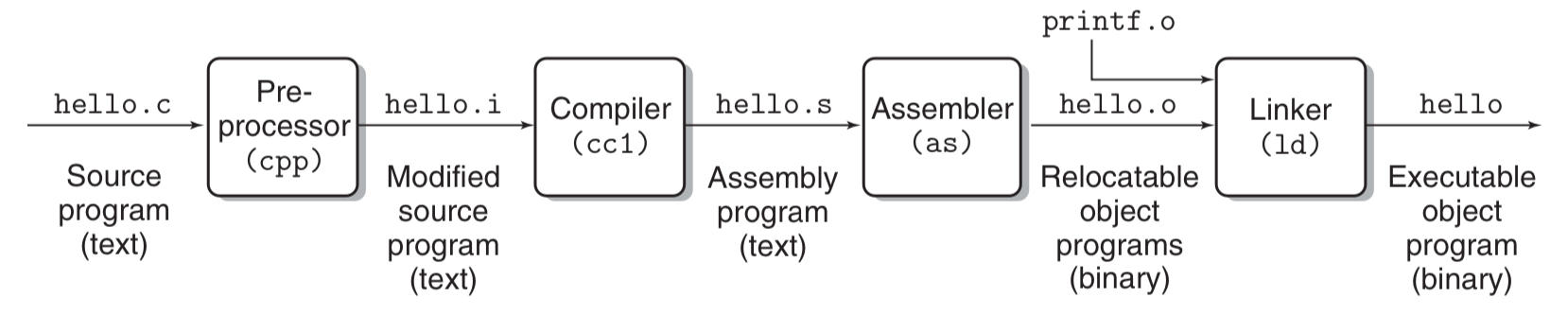

1.1 Lifetime of hello.c:

This book begins its study of systems by tracing the lifetime of the hello program, from the time it is created by a programmer, until it runs on a system, prints its simple message, and terminates.

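hello.c: a short C program whose main function calls printf to print the message "hello, world" followed by a newline.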

Lifetime of hello.c:

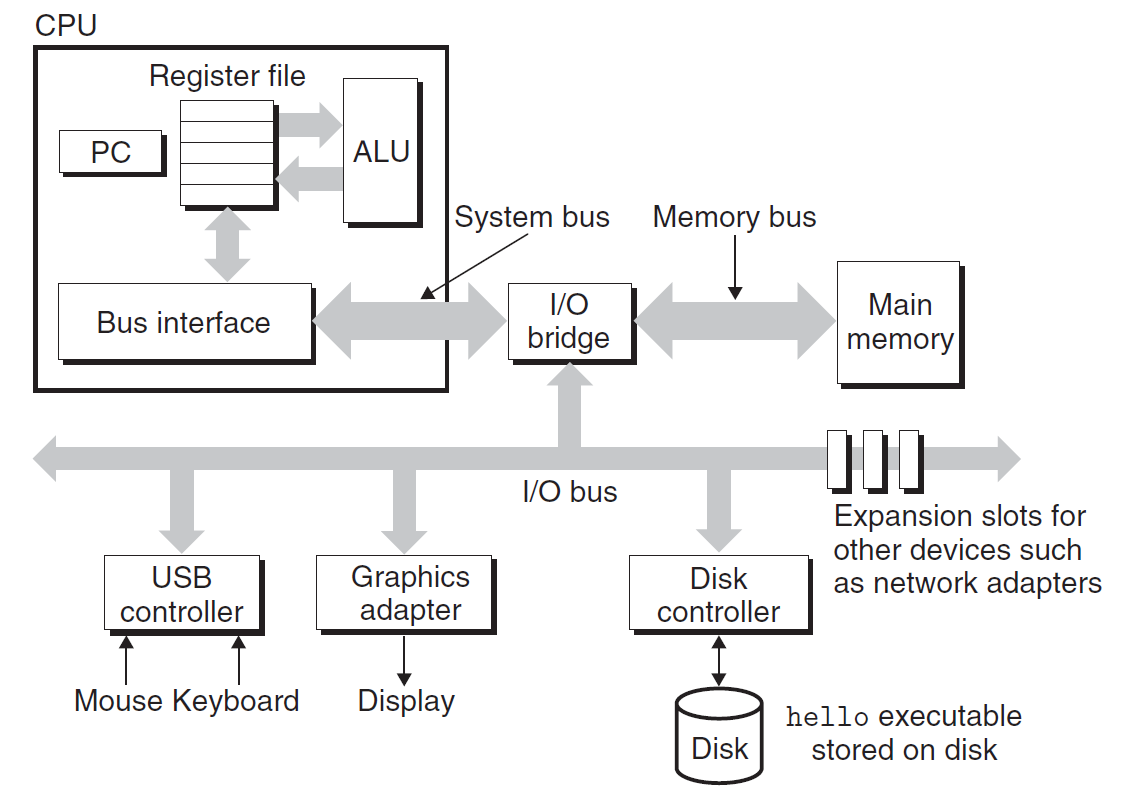

1.2 Hardware

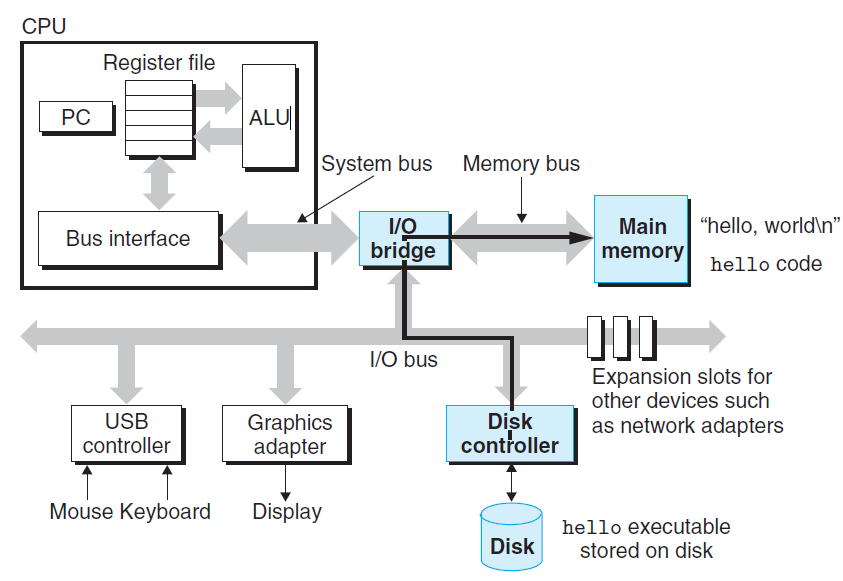

Hardware organization of a typical system:

Buses: a collection of electrical conduits running through the system.

Main Memory: a temporary storage device consisting of a collection of dynamic random access memory (DRAM) chips. It holds both a program and the data it manipulates while the processor is executing the program.

Processor: The central processing unit (CPU) is the engine that interprets (or executes) instructions stored in main memory. At its core is a word-sized storage device (or register) called the program counter (PC), which at any point in time holds the address of some machine-language instruction in main memory.
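To make the fetch-and-execute cycle concrete, here is a minimal sketch of a processor loop in C. The instruction set (`OP_LOAD`, `OP_ADD`, `OP_STORE`, `OP_HALT`), the single accumulator register, and the function name `run` are all hypothetical, invented for this illustration; no real processor is this simple.

```c
#include <stddef.h>

/* Hypothetical toy instruction set, invented for illustration only. */
enum opcode { OP_LOAD, OP_ADD, OP_STORE, OP_HALT };

struct instr {
    enum opcode op;
    size_t addr;    /* index into data memory (ignored by OP_HALT) */
};

/* Run `program` against `memory` and return the final accumulator value.
 * `pc` plays the role of the program counter: it always holds the index
 * of the next instruction to execute. The program must end with OP_HALT. */
long run(const struct instr *program, long *memory)
{
    size_t pc = 0;   /* program counter */
    long acc = 0;    /* a single register */

    for (;;) {
        struct instr in = program[pc];   /* fetch the instruction at pc */
        pc++;                            /* point at the next instruction */
        switch (in.op) {                 /* decode and execute */
        case OP_LOAD:  acc = memory[in.addr];  break;
        case OP_ADD:   acc += memory[in.addr]; break;
        case OP_STORE: memory[in.addr] = acc;  break;
        case OP_HALT:  return acc;
        }
    }
}
```

With `memory` holding `{2, 3, 0}`, the program `LOAD 0; ADD 1; STORE 2; HALT` leaves 5 in the accumulator and in `memory[2]`.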

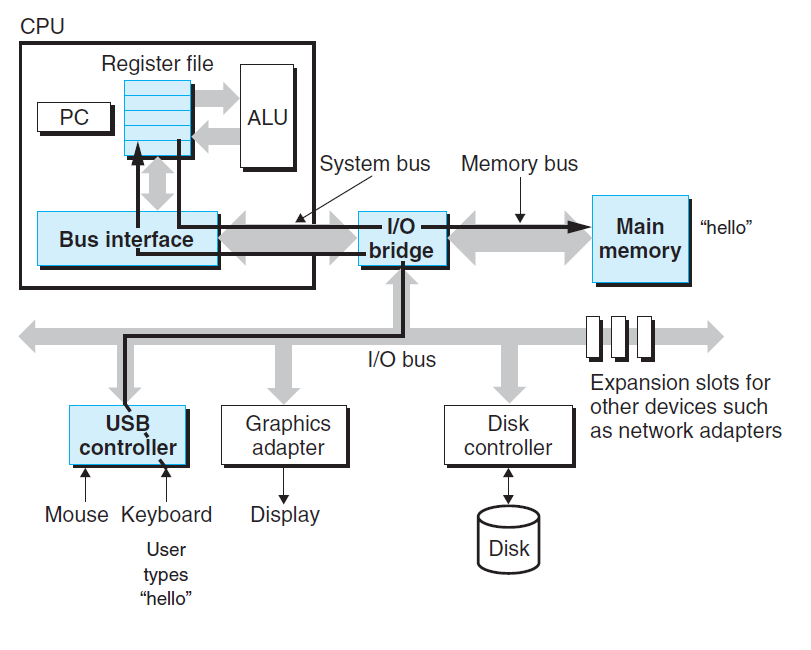

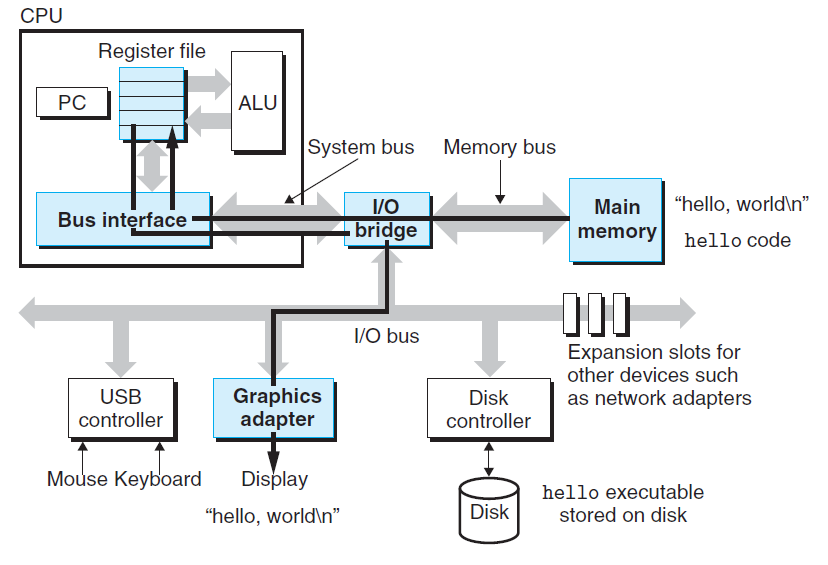

1.3 Running the hello program

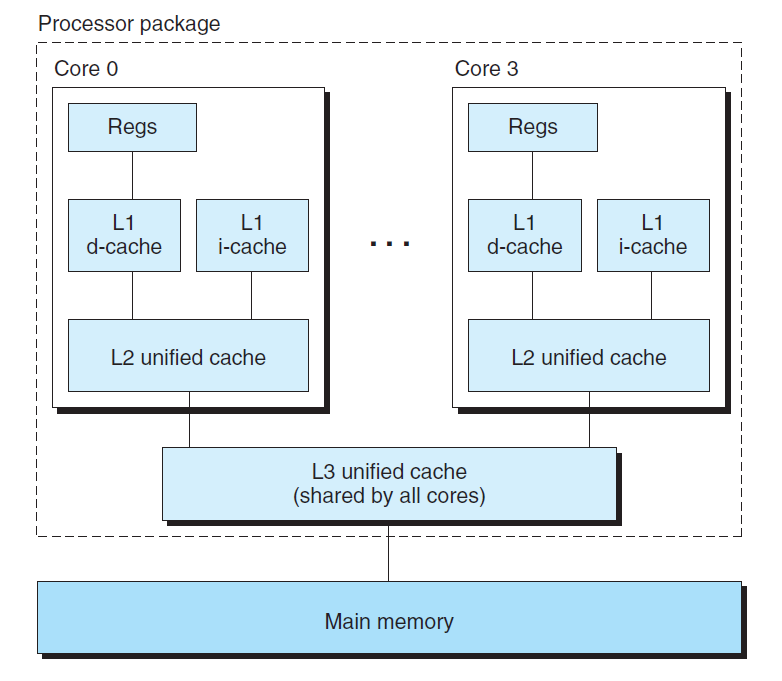

Cache: The processor can read data from the register file almost 100 times faster than from main memory. To deal with this processor-memory gap, system designers include smaller, faster storage devices called cache memories.

- L1 cache: holds tens of thousands of bytes and can be accessed nearly as fast as the register file. It is implemented with static random access memory (SRAM).

- L2 cache: holds hundreds of thousands to millions of bytes and is connected to the processor by a special bus. It is also implemented with SRAM.

- L3 cache: a larger cache that backs up L1 and L2. L1 and L2 can be significantly faster than L3, though L3 is still faster than DRAM (often cited as roughly double the speed).
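Caches matter to programmers because the order in which a program touches memory affects how often it finds data in the cache. The sketch below sums the same array in two orders; the array size and function names are assumptions chosen for illustration. Both functions return the same result, but the row-major version visits elements in the order C lays them out in memory, which usually makes better use of the cache. Any actual speed difference depends on the machine and compiler and is not measured here.

```c
#include <stddef.h>

#define ROWS 64
#define COLS 64

/* Visits elements in memory order (C arrays are row-major), so consecutive
 * accesses tend to fall in the same cache line. */
long sum_row_major(int a[ROWS][COLS])
{
    long sum = 0;
    for (size_t i = 0; i < ROWS; i++)
        for (size_t j = 0; j < COLS; j++)
            sum += a[i][j];
    return sum;
}

/* Visits elements column by column, jumping COLS ints between accesses,
 * so consecutive accesses tend to fall in different cache lines. */
long sum_col_major(int a[ROWS][COLS])
{
    long sum = 0;
    for (size_t j = 0; j < COLS; j++)
        for (size_t i = 0; i < ROWS; i++)
            sum += a[i][j];
    return sum;
}
```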

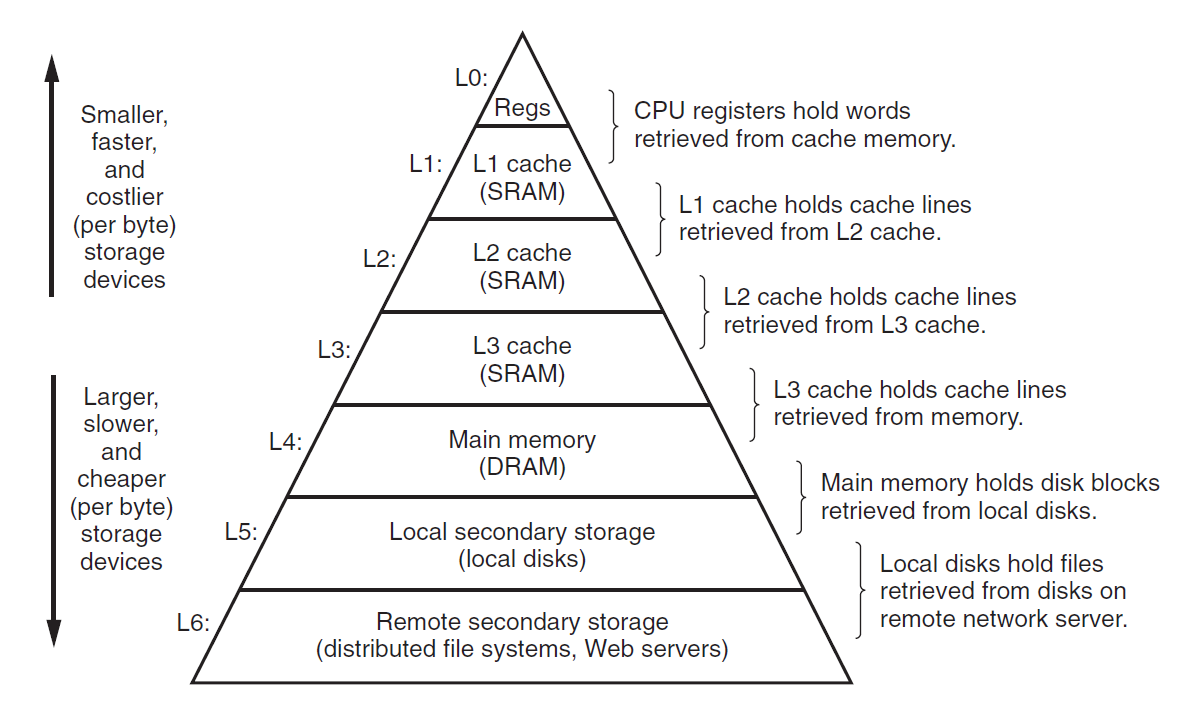

1.4 Storage Devices Form a Hierarchy

The main idea of a memory hierarchy is that storage at one level serves as a cache for storage at the next lower level.
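As a rough sketch of one level caching the level below it, the following C code puts a tiny direct-mapped cache in front of a larger array that stands in for slower storage. The sizes, the struct layout, and the name `cached_read` are hypothetical; real hardware caches work on multi-byte blocks and use more elaborate placement policies.

```c
#include <stddef.h>

#define CACHE_LINES 8   /* the small, fast level (size chosen arbitrarily) */

struct cache {
    int      valid[CACHE_LINES];  /* does this line hold anything yet? */
    size_t   tag[CACHE_LINES];    /* which address the line currently holds */
    int      data[CACHE_LINES];   /* the cached copy of that address */
    unsigned hits, misses;
};

/* Read backing[addr] through the cache. On a miss, the value is copied
 * from the lower level into the cache, replacing whatever was there. */
int cached_read(struct cache *c, const int *backing, size_t addr)
{
    size_t line = addr % CACHE_LINES;   /* each address maps to one line */

    if (c->valid[line] && c->tag[line] == addr) {
        c->hits++;                      /* served from the fast level */
    } else {
        c->misses++;                    /* go to the slower level */
        c->valid[line] = 1;
        c->tag[line] = addr;
        c->data[line] = backing[addr];
    }
    return c->data[line];
}
```

A cache initialized with `struct cache c = {0};` starts empty. Reading the same address twice gives one miss followed by one hit, while reading two addresses that map to the same line (for example 5 and 13) evicts the first.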

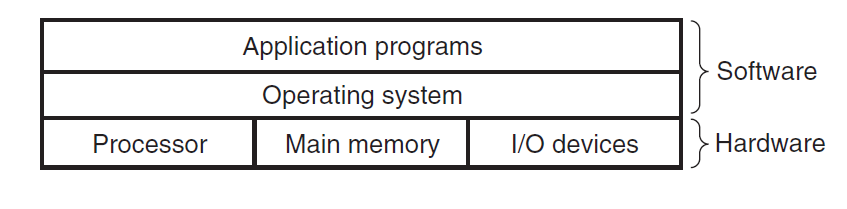

1.5 The Operating System Manages the Hardware

The operating system acts as a layer between application programs (such as the hello program) and the hardware (keyboard, display, disk, and main memory).

The operating system has two primary purposes:

- To protect the hardware from misuse by runaway applications.

- To provide applications with simple and uniform mechanisms for manipulating complicated and often wildly different low-level hardware devices.

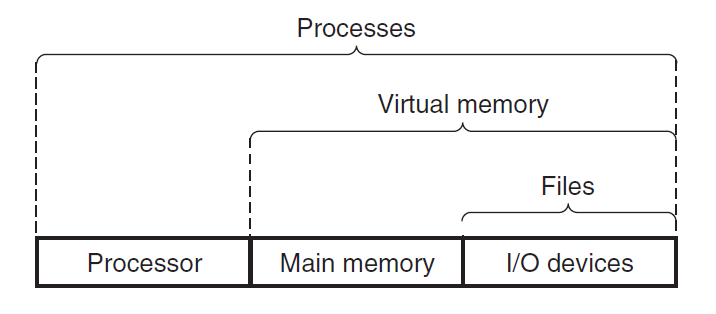

The operating system achieves both goals via the fundamental abstractions shown below: processes, virtual memory, and files.

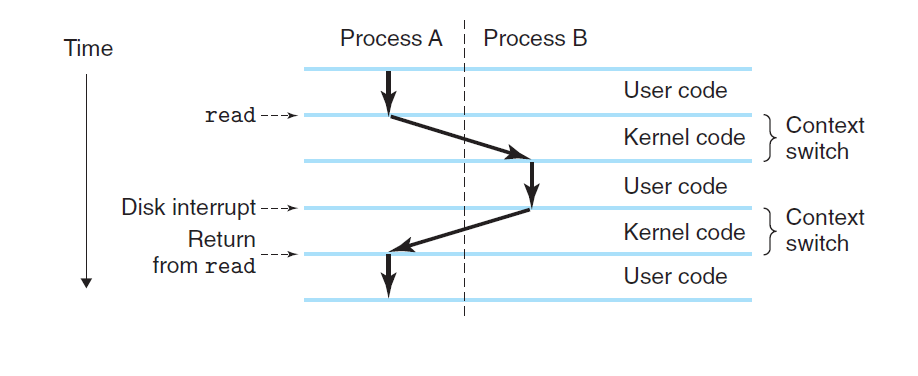

Process: A process is the operating system’s abstraction for a running program. Multiple processes can run concurrently on the same system, and each process appears to have exclusive use of the hardware. At any point in time, a uniprocessor system can only execute the code for a single process, so concurrency is achieved by interleaving the instructions of different processes. When the operating system decides to transfer control from the current process to some new process, it performs a context switch: it saves the context of the current process, restores the context of the new process, and passes control to the new process.
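The illusion of exclusive use can be observed directly. The sketch below assumes a POSIX system (it uses `fork`, `waitpid`, and `_exit`); the struct and the function name `fork_demo` are hypothetical. The child process changes its own copy of a variable, and the parent's copy is unaffected.

```c
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

struct fork_result {
    int parent_value;   /* what the parent sees after the child has run */
    int child_value;    /* what the child reported through its exit status */
};

/* Returns 0 on success, -1 if fork or waitpid fails. */
int fork_demo(struct fork_result *out)
{
    int value = 1;
    pid_t pid = fork();          /* create a second process */
    if (pid < 0)
        return -1;

    if (pid == 0) {              /* child: works on its own copy of `value` */
        value = 99;
        _exit(value);            /* report the child's value (fits in 8 bits) */
    }

    int status = 0;              /* parent: wait for the child to finish */
    if (waitpid(pid, &status, 0) < 0 || !WIFEXITED(status))
        return -1;

    out->parent_value = value;   /* unchanged by the child's write */
    out->child_value = WEXITSTATUS(status);
    return 0;
}
```

After a successful call, `parent_value` is 1 and `child_value` is 99: each process had its own private view of `value`.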

Threads: Although we normally think of a process as having a single control flow, in modern systems a process can actually consist of multiple execution units, called threads, each running in the context of the process and sharing the same code and global data.
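Here is a minimal sketch of two threads sharing global data, assuming POSIX threads are available (on many systems this requires compiling with `-pthread`). The array size and the names `data`, `partial`, `sum_half`, and `threaded_sum` are hypothetical. Both threads run the same code and read the same global array; each writes to its own result slot, so no locking is needed.

```c
#include <pthread.h>
#include <stddef.h>

#define N 1000

static int  data[N];       /* global data, visible to every thread */
static long partial[2];    /* one result slot per thread */

/* Thread body: sum one half of the shared array. */
static void *sum_half(void *arg)
{
    size_t id = *(const size_t *)arg;    /* 0 or 1 */
    size_t begin = id * (N / 2);
    size_t end = begin + N / 2;
    long s = 0;

    for (size_t i = begin; i < end; i++)
        s += data[i];
    partial[id] = s;
    return NULL;
}

/* Sum `data` using two threads. Returns -1 if a thread cannot be created. */
long threaded_sum(void)
{
    pthread_t t[2];
    size_t ids[2] = {0, 1};
    size_t started = 0;

    for (; started < 2; started++)
        if (pthread_create(&t[started], NULL, sum_half, &ids[started]) != 0)
            break;
    for (size_t i = 0; i < started; i++)
        pthread_join(t[i], NULL);        /* wait for each thread to finish */

    return started == 2 ? partial[0] + partial[1] : -1;
}
```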

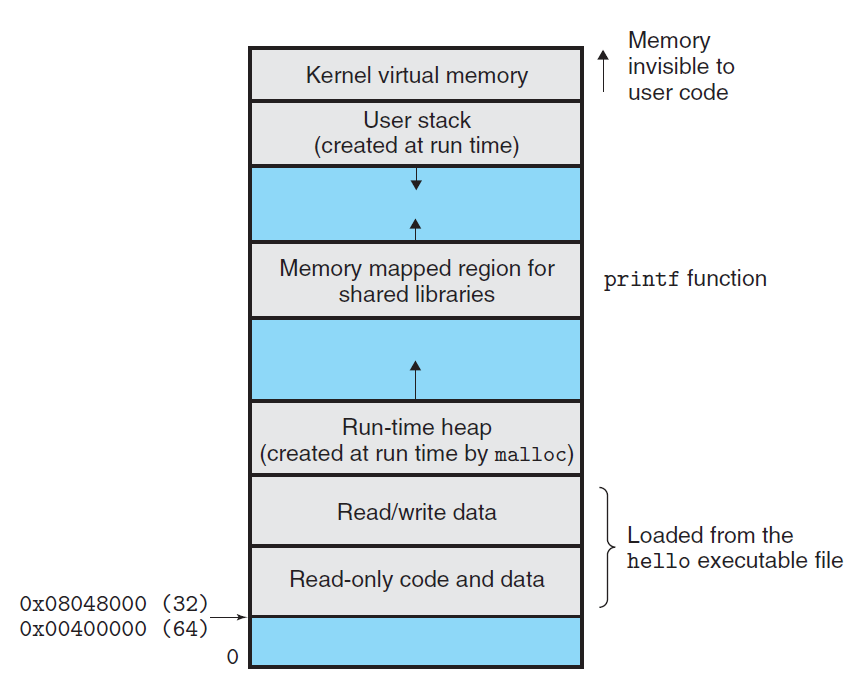

Virtual Memory: It is an abstraction that provides each process with the illusion that it has exclusive use of the main memory. Each process has the same uniform view of memory, which is known as its virtual address space. The basic idea is to store the contents of a process’s virtual memory on disk, and then use the main memory as a cache for the disk. From the lowest addresses to the highest, the virtual address space contains the following areas:

- Program code and data: Code begins at the same fixed address for all processes, followed by data locations that correspond to global C variables.

- Heap: It expands and contracts dynamically at run time as a result of calls to C standard library routines such as malloc and free.

- Shared libraries: Such as the C standard library and the math library.

- Stack: The area the compiler uses to implement function calls. Like the heap, it expands and contracts dynamically during the execution of the program.

- Kernel virtual memory: The kernel is the part of the operating system that is always resident in memory.
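The user-visible areas above can be sampled from a running C program. This is only a sketch: the struct and function names are hypothetical, converting a function pointer to an integer is implementation-defined, and the actual addresses vary between systems and between runs.

```c
#include <stdint.h>
#include <stdlib.h>

static int global_counter = 1;   /* stored in the data area */

struct regions {
    uintptr_t code;    /* address of a function */
    uintptr_t data;    /* address of a global variable */
    uintptr_t heap;    /* address returned by malloc */
    uintptr_t stack;   /* address of a local variable */
};

/* Record one sample address from each area. Returns 0 on success,
 * -1 if malloc fails. */
int sample_regions(struct regions *out)
{
    int local = 0;                           /* stored on the stack */
    int *dynamic = malloc(sizeof *dynamic);  /* stored on the heap */
    if (dynamic == NULL)
        return -1;

    out->code  = (uintptr_t)&sample_regions;
    out->data  = (uintptr_t)&global_counter;
    out->heap  = (uintptr_t)dynamic;
    out->stack = (uintptr_t)&local;

    free(dynamic);
    return 0;
}
```

Printing the four values on a typical Linux system tends to show the stack address far above the others, but the exact layout is not guaranteed.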

File: A file is a sequence of bytes. Every I/O device, including disks, keyboards, displays, and networks, is modeled as a file.
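A short sketch of the "sequence of bytes" view, using only the C standard library. The function name `count_file_bytes` is hypothetical. The text is written to a temporary file and then read back one byte at a time, with no structure beyond the bytes themselves.

```c
#include <stdio.h>

/* Write `text` to a temporary file, then read it back byte by byte.
 * Returns the number of bytes read, or -1 on error. */
long count_file_bytes(const char *text)
{
    FILE *f = tmpfile();             /* removed automatically when closed */
    if (f == NULL)
        return -1;
    if (fputs(text, f) == EOF) {
        fclose(f);
        return -1;
    }

    rewind(f);                       /* go back to the first byte */
    long n = 0;
    while (fgetc(f) != EOF)          /* read one byte at a time */
        n++;

    fclose(f);
    return n;
}
```

For the string `"hello, world\n"` the function returns 13, one for each character including the newline.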

1.6 Concurrency and Parallelism

Concurrency: It refers to the general concept of a system with multiple, simultaneous activities.

Parallelism: It refers to the use of concurrency to make a system run faster.

Concurrency and parallelism can be exploited at three levels of abstraction in a computer system:

Thread-Level Concurrency: The use of multiprocessing can improve system performance in two ways.

- First, it reduces the need to simulate concurrency when performing multiple tasks.

- Second, it can run a single application program faster, but only if that program is expressed in terms of multiple threads that can effectively execute in parallel.

Processor configurations: Uniprocessor System → Multiprocessor System → Multi-core Processors and Hyperthreading → Simultaneous Multi-threading

Instruction-Level Parallelism: At a much lower level of abstraction, modern processors can execute multiple instructions at one time, a property known as instruction-level parallelism.
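One way source code can expose instruction-level parallelism is to break a long chain of dependent operations into independent ones. The sketch below is an illustration under that assumption, with hypothetical function names; whether the second version actually runs faster depends on the compiler and the processor.

```c
#include <stddef.h>

/* One accumulator: every addition depends on the result of the previous one. */
long sum_one_acc(const int *a, size_t n)
{
    long s = 0;
    for (size_t i = 0; i < n; i++)
        s += a[i];
    return s;
}

/* Two accumulators: the additions into s0 and s1 do not depend on each
 * other, so a processor may be able to execute them at the same time. */
long sum_two_acc(const int *a, size_t n)
{
    long s0 = 0, s1 = 0;
    size_t i = 0;

    for (; i + 1 < n; i += 2) {
        s0 += a[i];
        s1 += a[i + 1];
    }
    if (i < n)              /* leftover element when n is odd */
        s0 += a[i];
    return s0 + s1;
}
```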

Single-Instruction, Multiple-Data (SIMD) Parallelism: At the lowest level, many modern processors have special hardware that allows a single instruction to cause multiple operations to be performed in parallel, a mode known as single-instruction, multiple-data, or “SIMD” parallelism.
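As a small sketch of SIMD-style code, the example below uses the vector extension supported by GCC and Clang (`__attribute__((vector_size(...)))`), which is not part of standard C. The type name `v4f` and the function `add4` are hypothetical. A single `+` expression adds four floats; whether it compiles to a single SIMD instruction depends on the compiler and the target machine.

```c
#include <string.h>

/* GCC/Clang extension, not standard C: four floats packed into 16 bytes. */
typedef float v4f __attribute__((vector_size(16)));

/* out[i] = a[i] + b[i] for i in 0..3 */
void add4(const float *a, const float *b, float *out)
{
    v4f va, vb, vc;

    memcpy(&va, a, sizeof va);    /* load four floats, no alignment assumed */
    memcpy(&vb, b, sizeof vb);
    vc = va + vb;                 /* one expression, four additions */
    memcpy(out, &vc, sizeof vc);  /* store the four results */
}
```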

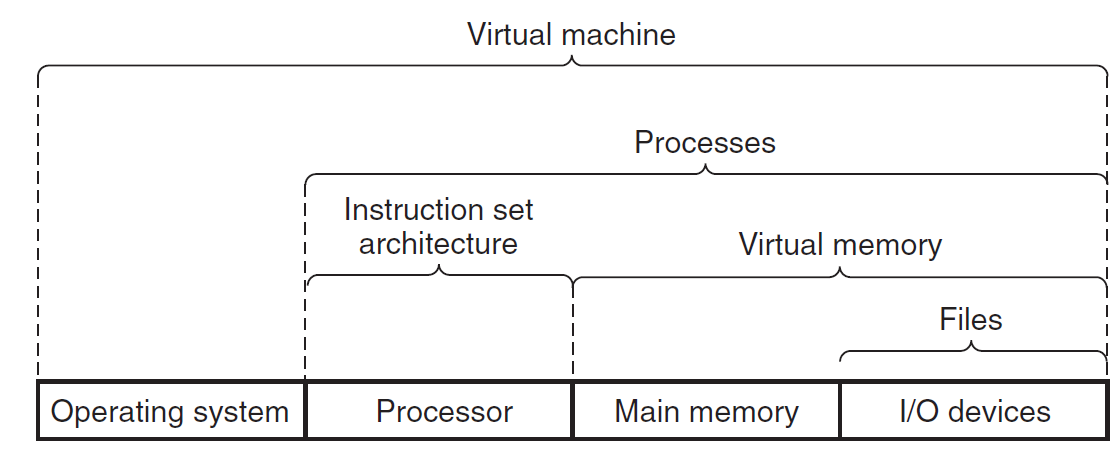

1.7 Some abstractions provided by a computer system:

- Files as an abstraction of I/O

- Virtual Memory as an abstraction of program memory

- Processes as an abstraction of a running program

- Virtual Machine as an abstraction of the entire computer


Thanks for reading!